Artificial intelligence is often described as a force that will replace jobs, disrupt industries, and change society in unpredictable ways. These concerns are understandable. Yet history shows a consistent pattern: powerful tools transform work, but they do not eliminate human value.

AI is not inherently good or bad. Like previous technologies, its impact depends on how people design, manage, and use it. The key question is not whether AI will replace humans, but how humans choose to use AI.

Technology Has Always Changed the Nature of Work

Every major technological shift has raised similar concerns.

- The printing press automated the copying of texts, but it created new roles in publishing, education, and journalism.
- The steam engine transformed manufacturing and transportation, but it also created new industries and technical professions.
- Electricity improved productivity in factories and homes, leading to new jobs in engineering and infrastructure.
- Computers and the internet automated calculations and communication, yet they also generated entirely new sectors such as software development, cybersecurity, and digital marketing.

In each case, tools did not eliminate human contribution. They changed the type of work people performed. Routine and repetitive tasks were automated, while human roles shifted toward decision-making, creativity, and problem-solving.

AI follows the same pattern.

What Makes AI Different?

AI systems, especially Large Language Models (LLMs) and advanced machine learning models, can perform tasks that were once considered uniquely human. These include:

- Writing reports
- Generating code
- Analyzing large datasets
- Recognizing images and speech
- Supporting customer interactions

This creates the impression that AI can replace knowledge workers entirely. However, AI systems operate based on patterns in data. They do not possess true understanding, intention, or accountability.

AI produces outputs based on probability and training data. Humans interpret context, apply ethics, and take responsibility for decisions. This distinction is critical.

AI can assist. Humans must lead.

AI as Augmentation, Not Substitution

A useful way to think about AI is as an augmentation tool rather than a replacement.

For example:

-

A doctor may use AI to analyze medical images, but the final diagnosis and patient communication remain human responsibilities.

-

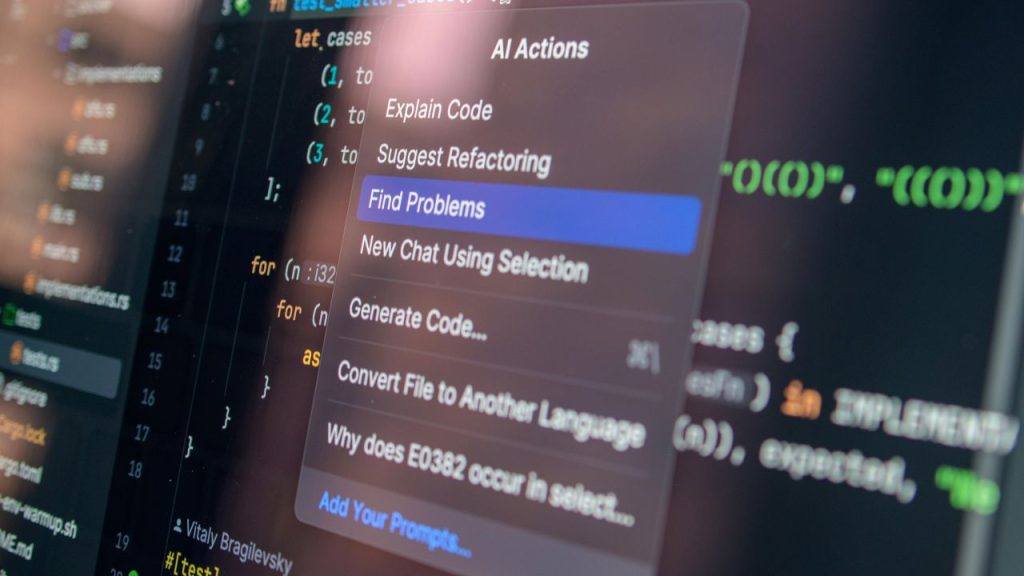

A software engineer may use AI to generate code snippets, but architectural design and validation require expertise.

-

A financial analyst may rely on predictive models, but risk assessment and strategic planning involve judgment.

In these cases, AI increases speed and efficiency. It reduces repetitive workload. It supports professionals in focusing on higher-value tasks.

This model is often called human-in-the-loop AI, where AI assists but does not act independently without oversight.
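As a rough illustration of the human-in-the-loop idea, the sketch below treats the AI output as a suggestion that a named reviewer must accept or override before it becomes a decision. All names here (`Suggestion`, `Decision`, `review`, `cautious_reviewer`) and the 0.8 confidence cutoff are hypothetical and chosen only for this example; a real workflow would define its own review rules.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Suggestion:
    """An AI-generated proposal (hypothetical structure for this sketch)."""
    content: str
    confidence: float  # assumed model score in [0, 1]


@dataclass
class Decision:
    """The final outcome, always attributed to a human reviewer."""
    content: str
    approved_by: str
    ai_suggestion_accepted: bool


def review(suggestion: Suggestion,
           reviewer: Callable[[Suggestion], str],
           reviewer_name: str) -> Decision:
    """Pass the AI suggestion to a human reviewer, who returns the final content."""
    final_content = reviewer(suggestion)
    return Decision(
        content=final_content,
        approved_by=reviewer_name,
        ai_suggestion_accepted=(final_content == suggestion.content),
    )


def cautious_reviewer(suggestion: Suggestion) -> str:
    """Stand-in for a person: accept confident suggestions, escalate the rest."""
    if suggestion.confidence >= 0.8:  # illustrative cutoff, not a recommendation
        return suggestion.content
    return "Escalated for manual analysis"


decision = review(Suggestion("No anomaly detected", 0.55),
                  cautious_reviewer, "reviewer_1")
print(decision)
```

The point of the design is that no `Decision` exists without an `approved_by` value, so responsibility stays with a person even when the suggestion is accepted unchanged.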

Why AI Is Not Inherently Good or Bad

Technology itself is neutral. Its impact depends on human intention.

A simple tool such as a hammer can build a house or cause harm. The tool is not responsible; the user is.

The same applies to AI:

- AI can improve healthcare diagnostics.
- AI can optimize energy usage and reduce waste.
- AI can enhance accessibility for people with disabilities.
- AI can also be misused for misinformation, surveillance, or biased decision-making.

The ethical outcome depends on:

- The data used to train the system
- The objectives defined by developers
- The governance policies of organizations
- The regulations established by governments

AI reflects the values and priorities of its creators and users.

Addressing the Fear of Job Loss

One of the main reasons for resistance to AI is fear of job displacement. This concern should not be dismissed. Automation can disrupt certain roles, especially those built around repetitive or rule-based tasks.

However, the historical record suggests that technological revolutions tend to:

- Eliminate some tasks
- Transform many roles
- Create new types of employment

For example, when ATMs were introduced, many predicted the disappearance of bank tellers. Instead, the role evolved. Tellers shifted from basic cash handling to customer service and financial advising.

Similarly, AI may automate data entry or routine reporting, but it increases demand for:

- AI oversight specialists
- Data governance experts
- Prompt engineers
- AI system auditors
- Ethical compliance officers

The workforce does not disappear. It adapts.

The key challenge is not preventing AI adoption, but ensuring reskilling and education keep pace with technological change.

Ethical Responsibilities in AI Deployment

Using AI responsibly requires structured governance. Organizations must address several ethical dimensions.

1. Transparency

Users should understand when they are interacting with AI. Clear disclosure builds trust and accountability.

2. Fairness and Bias Control

AI models trained on biased data can produce biased outcomes. Regular auditing and diverse datasets are necessary to reduce this risk.
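One simple check that a regular audit might include is comparing how often each group receives a favorable outcome. The sketch below computes per-group selection rates and the gap between the highest and lowest rate, a measure often called the demographic parity difference. The function names and the sample records are made up for illustration; a real audit would use several metrics and real decision logs.

```python
from collections import defaultdict
from typing import Dict, Iterable, Tuple


def selection_rates(records: Iterable[Tuple[str, int]]) -> Dict[str, float]:
    """Return the share of favorable outcomes (1) per group.

    Each record is (group, outcome), where outcome is 1 for a favorable
    decision and 0 otherwise.
    """
    totals: Dict[str, int] = defaultdict(int)
    favorable: Dict[str, int] = defaultdict(int)
    for group, outcome in records:
        totals[group] += 1
        favorable[group] += outcome
    return {group: favorable[group] / totals[group] for group in totals}


def demographic_parity_gap(records: Iterable[Tuple[str, int]]) -> float:
    """Difference between the highest and lowest group selection rates."""
    rates = selection_rates(list(records))
    return max(rates.values()) - min(rates.values())


# Hypothetical audit sample: group label and whether the model approved.
sample = [
    ("A", 1), ("A", 1), ("A", 0), ("A", 0),
    ("B", 1), ("B", 0), ("B", 0), ("B", 0),
]
print(selection_rates(sample))         # {'A': 0.5, 'B': 0.25}
print(demographic_parity_gap(sample))  # 0.25
```

A gap of zero means every group is selected at the same rate. A large gap does not prove unfairness on its own, but it flags a model for closer human review.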

3. Data Privacy

AI systems often process large volumes of personal or sensitive information. Strong data protection policies are essential.

4. Accountability

Clear responsibility must be assigned. Even when AI systems make automated decisions, humans and organizations remain accountable.

Ethical AI is not optional. It is a requirement for sustainable adoption.

Avoiding Overdependence on AI

While AI offers efficiency, overreliance can create new risks.

Examples include:

- Accepting AI-generated content without verification
- Relying on automated decisions without human review
- Reducing critical thinking due to convenience

To avoid dependency:

- Use AI as a first draft generator, not a final authority.
- Validate outputs with domain expertise.
- Maintain human review processes.
- Encourage continuous learning rather than passive automation.

AI should support cognitive work, not replace analytical thinking.

The Responsibility for Ethical AI Is Ours

AI is neither a threat nor a solution by itself. It is a tool — powerful, scalable, and efficient. Like every major technological advancement before it, AI changes how we work but does not eliminate the need for human intelligence.

The real question is not whether AI will replace us. It is whether we will use it responsibly.

Human intention defines the outcome. If guided by ethics, transparency, and education, AI becomes a tool that enhances productivity and supports progress. If used carelessly, it creates risk.

The future of work will not be defined by machines replacing people. It will be defined by how effectively people use intelligent tools to build a more efficient, fair, and productive society.

At Kotwel, ethical AI is built into every solution we deliver. We design and deploy AI systems that are transparent, secure, and aligned with human values. Our team supports organizations in designing AI solutions with strong governance frameworks, bias evaluation processes, and human-in-the-loop controls. By combining technical expertise with ethical oversight, we help businesses use AI as a tool for progress—without compromising trust or integrity.
